The Lange Nacht der Forschung 2026 (Long Night of Research) turned out to be a truly special evening, one that once again demonstrated how powerful it can be to bring science and research closer to the public. Thanks to the remarkable engagement, creativity, and enthusiasm of everyone involved, complex ideas were transformed into hands-on experiences for a broad and diverse audience.

With more than 9,000 visitors across the Lakeside Science & Technology Park and the University of Klagenfurt campus, the event was a great success. Each individual station contributed to making research tangible, interactive, and inspiring.

Strong Presence of Our Department

Our department was proudly represented with six stations, four of which were hosted by our lab. Together, they showcased cutting-edge research in multimedia, artificial intelligence, and interactive systems, demonstrating both scientific depth and real-world impact.

Highlights from Our Lab

At our lab’s four stations, visitors had the opportunity to explore current research in an engaging and interactive way:

Detecting Damage in Wind Turbines with AI (L25)

How can we inspect wind turbines without shutting them down? This station introduced the DORBINE project, where AI-powered drone swarms are used for automated inspection. A two-meter model vividly demonstrated how such intelligent systems could reduce costs and downtime while improving energy efficiency.

Making 3D Video More Realistic (L26)

Visitors were introduced to 3D Gaussian Splatting (3DGS), a next-generation 3D video technology that enables highly realistic rendering of scenes with reduced data requirements. Through hands-on interaction, they experienced how real-world environments can be captured and reproduced as immersive 3D spaces.

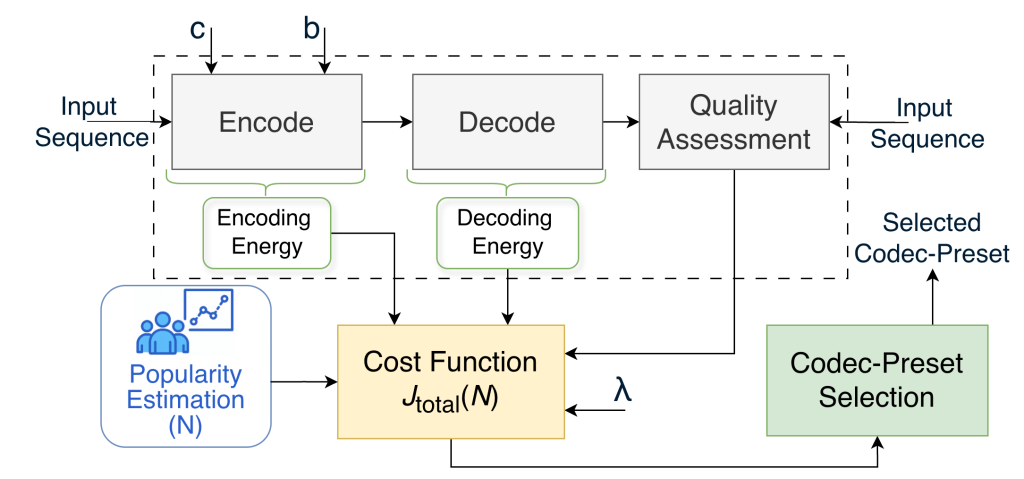

Enhancing Video Quality with Super-Resolution (L27)

This station focused on AI-based super-resolution techniques. Attendees could directly compare videos of different quality levels and observe in real time how machine learning reconstructs fine details and textures from low-resolution footage.

Experiencing Multimedia with 3D Interaction (L28)

Using Apple Vision Pro head-mounted displays, visitors explored stereoscopic spatial videos and tested their skills in a 3D dart game. This station highlighted how perception and interaction merge in next-generation multimedia experiences, offering a glimpse into future human-computer interaction.

Making Research Tangible

What made the evening particularly special was not only the technologies themselves but also the way they were communicated: interactive demos, hands-on exploration, and direct conversations with researchers allowed visitors of all ages to engage with science in a meaningful way.

Thank You

A big thank you to everyone who contributed to making this event such a success, through preparation, creativity, and dedication on-site. Events like the Lange Nacht der Forschung thrive on teamwork, and this year was a perfect example.
