Title: Advances in Imaging, Perception, and Reasoning for High-Dimensional Visual Data

Conference: VCIP 2026

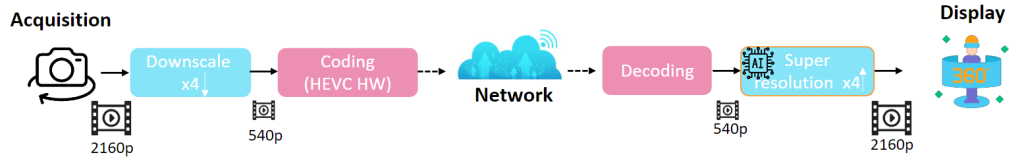

Abstract: Recent advances in visual sensing, computational imaging, neural representations, and multimodal learning are transforming how visual data are acquired, processed, communicated, and understood. Modern visual systems increasingly rely on high-dimensional visual data that extend beyond conventional RGB images and videos to include event streams, light fields, hyperspectral and polarization imagery, LiDAR, time-of-flight sensing, neural scene representations, 3D Gaussian splats, and hybrid multimodal sensing. These data capture rich spatial, temporal, geometric, spectral, and cross-modal information, enabling more robust visual processing under challenging conditions such as fast motion, low light, occlusion, missing modalities, and distribution shift. At the same time, the growing complexity and volume of high-dimensional visual data create new challenges in acquisition, restoration, compression, representation, quality assessment, perception, and reasoning. Emerging solutions increasingly integrate imaging, communication, perception, and multimodal intelligence to support reliable visual understanding and decision-making. In line with these developments, we invite contributions on computational imaging and novel sensing systems, event-based and multimodal vision, high-dimensional visual restoration and enhancement, learned compression, implicit and neural representations, quality assessment, cross-modal fusion and alignment, robust visual perception, vision-language reasoning, trustworthy AI, and efficient visual communication for next-generation visual systems.

ORGANIZERS

- Haowen Bai (Nanyang Technological University, SG)

- Rui Zhao (Nanyang Technological University, SG)

- Zeyu Xiao (National University of Singapore, SG)

- Taewoo Kim (INSAIT, BG)

- Hadi Amirpour (University of Klagenfurt, AT)

- Tae Hyun Kim (Hanyang University, KR)