The Faculty of Technical Sciences at the University of Klagenfurt nominated Alexander Lercher from ITEC (Radu Prodan’s group) for the Best Performer Award owing to his outstanding performance in his studies. He will receive this honor at a public presentation in lecture hall -3 of the University of Klagenfurt on September 16, 2020. In the course of research carried out by the Studies and Examination Department, Alexander was identified as the most successful student in this field of study.

Elsevier’s Journal of Information and Software Technology (INSOF) accepted the manuscript “A Dynamic Evolutionary Multi-Objective Virtual Machine Placement Heuristic for Cloud Infrastructures”.

Authors: Ennio Torre, Juan J. Durillo (Leibniz Supercomputing Center), Vincenzo de Maio (Vienna University of Technology), Prateek Agrawal (University of Klagenfurt), Shajulin Benedict (Indian Institute of Information Technology), Nishant Saurabh (University of Klagenfurt), Radu Prodan (University of Klagenfurt).

Abstract: Minimizing the resource wastage reduces the energy cost of operating a data center, but may also lead to a considerably high resource over-commitment affecting the Quality of Service (QoS) of the running applications. The effective trade-off between resource wastage and over-commitment is a challenging task in virtualized Clouds and depends on the allocation of virtual machines (VMs) to physical resources. We propose in this paper a multi-objective method for dynamic VM placement, which exploits live migration mechanisms to simultaneously optimize the resource wastage, over-commitment ratio and migration energy. Our optimization algorithm uses a novel evolutionary meta-heuristic based on an island population model to approximate the Pareto optimal set of VM placements with good accuracy and diversity. Simulation results using traces collected from a real Google cluster demonstrate that our method outperforms related approaches by reducing the migration energy by up to 57 % with a QoS increase below 6 %.

Acknowledgements:

This work is supported by:

- European Union’s Horizon 2020 research and innovation programme, grant agreement 825134, “Smart Social Media Ecosystem in a Blockchain Federated Environment (ARTICONF)”;

- Austrian Science Fund (FWF), grant agreement Y 904 START-Programm 2015, “Runtime Control in Multi Clouds (RUCON)”;

- Austrian Agency for International Cooperation in Education and Research (OeAD-GmbH) and Indian Department of Science and Technology (DST), project number IN 20/2018, “Energy Aware Workflow Compiler for Future Heterogeneous Systems”.
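The abstract above refers to approximating the Pareto optimal set of VM placements over three objectives (resource wastage, over-commitment ratio, and migration energy). As a minimal illustration of what Pareto optimality means in this setting, and not the paper's island-based evolutionary meta-heuristic, the following sketch filters the non-dominated candidates from a list of objective tuples. It assumes all three objectives are minimized; the function names and sample values are hypothetical.

```python
from typing import List, Tuple

# Hypothetical objective vector: (wastage, over_commitment, migration_energy).
# Assumption: every objective is to be minimized.
Objectives = Tuple[float, float, float]


def dominates(a: Objectives, b: Objectives) -> bool:
    """True if a is no worse than b everywhere and strictly better somewhere."""
    no_worse = all(x <= y for x, y in zip(a, b))
    strictly_better = any(x < y for x, y in zip(a, b))
    return no_worse and strictly_better


def pareto_front(candidates: List[Objectives]) -> List[Objectives]:
    """Keep only the candidates that no other candidate dominates."""
    return [
        c for c in candidates
        if not any(dominates(other, c) for other in candidates)
    ]


# Made-up placements, for illustration only.
placements = [
    (0.30, 1.10, 50.0),
    (0.25, 1.20, 40.0),
    (0.35, 1.15, 55.0),  # worse than the first placement in every objective
    (0.20, 1.30, 60.0),
]
front = pareto_front(placements)
```

Here the third placement is dropped because the first one is better in all three objectives; the remaining three represent different trade-offs, and none of them can be improved in one objective without worsening another.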

The manuscript “Expelliarmus: Semantic-Centric Virtual Machine Image Management in IaaS Clouds” has been accepted for publication in the Journal of Parallel and Distributed Computing (JPDC) (https://www.journals.elsevier.com/journal-of-parallel-and-distributed-computing).

Authors: Nishant Saurabh (University of Klagenfurt), Shajulin Benedict (Indian Institute of Information Technology, Kottayam), Jorge G. Barbosa (LIACC, Faculdade de Engenharia da Universidade do Porto), Radu Prodan (University of Klagenfurt).

Abstract: Infrastructure-as-a-service (IaaS) Clouds concurrently accommodate diverse sets of user requests, requiring an efficient strategy for storing and retrieving virtual machine images (VMIs) at a large scale. VMI storage management requires dealing with multiple VMIs, typically in the magnitude of gigabytes, which entails VMI sprawl issues hindering elastic resource management and provisioning. Nevertheless, existing techniques to facilitate VMI management overlook VMI semantics (i.e., at the level of base image and software packages), with either restricted possibility to identify and extract reusable functionalities or higher VMI publish and retrieval overheads. In this paper, we design, implement and evaluate Expelliarmus, a novel VMI management system that helps to minimize storage, publish and retrieval overheads. To achieve this goal, Expelliarmus incorporates three complementary features. First, it makes use of VMIs modelled as semantic graphs to expedite the similarity computation between multiple VMIs. Second, Expelliarmus provides a semantic-aware VMI decomposition and base image selection to extract and store non-redundant base images and software packages. Third, Expelliarmus can also assemble VMIs based on the required software packages upon user request. We evaluate Expelliarmus through a representative set of synthetic Cloud VMIs on a real test-bed. Experimental results show that our semantic-centric approach is able to optimize repository size by 2.3-22 times compared to state-of-the-art systems (e.g., IBM’s Mirage and Hemera) with significant VMI publish and slight retrieval performance improvement.

Acknowledgements:

This work is supported by:

- European Union’s Horizon 2020 research and innovation programme, grant agreement 825134, “Smart Social Media Ecosystem in a Blockchain Federated Environment (ARTICONF)”;

- Austrian Agency for International Cooperation in Education and Research (OeAD-GmbH) and Indian Department of Science and Technology (DST), project number IN 20/2018, “Energy Aware Workflow Compiler for Future Heterogeneous Systems”.

With the coming of age of virtual/augmented reality and interactive media, numerous definitions, frameworks, and models of immersion have emerged across different fields ranging from computer graphics to literary works. Immersion is oftentimes used interchangeably with presence as both concepts are closely related. However, there are noticeable interdisciplinary differences regarding definitions, scope, and constituents that are required to be addressed so that a coherent understanding of the concepts can be achieved. Such consensus is vital for paving the directionality of the future of immersive media experiences (IMEx) and all related matters.

Authors: Negin Ghamsarian (Alpen-Adria-Universität Klagenfurt), Hadi Amirpour (Alpen-Adria-Universität Klagenfurt), Christian Timmerer (Alpen-Adria-Universität Klagenfurt, Bitmovin), Mario Taschwer (Alpen-Adria-Universität Klagenfurt), and Klaus Schöffmann (Alpen-Adria-Universität Klagenfurt)

Abstract: Recorded cataract surgery videos play a prominent role in training and investigating the surgery, and enhancing the surgical outcomes. Due to storage limitations in hospitals, however, the recorded cataract surgeries are deleted after a short time and this precious source of information cannot be fully utilized. Lowering the quality to reduce the required storage space is not advisable since the degraded visual quality results in the loss of relevant information that limits the usage of these videos. To address this problem, we propose a relevance-based compression technique consisting of two modules: (i) relevance detection, which uses neural networks for semantic segmentation and classification of the videos to detect relevant spatio-temporal information, and (ii) content-adaptive compression, which restricts the amount of distortion applied to the relevant content while allocating less bitrate to irrelevant content. The proposed relevance-based compression framework is implemented considering five scenarios based on the definition of relevant information from the target audience’s perspective. Experimental results demonstrate the capability of the proposed approach in relevance detection. We further show that the proposed approach can achieve high compression efficiency by abstracting substantial redundant information while retaining the high quality of the relevant content.

ACM International Conference on Multimedia 2020, Seattle, United States.

Link: https://2020.acmmm.org

Keywords: Video Coding, Convolutional Neural Networks, HEVC, ROI Detection, Medical Multimedia.

The Sixth IEEE International Conference on Multimedia Big Data (BigMM 2020)

http://bigmm2020.org/

Authors: Anatoliy Zabrovskiy (Alpen-Adria-Universität Klagenfurt), Prateek Agrawal (Alpen-Adria-Universität Klagenfurt, Lovely Professional University), Roland Matha (Alpen-Adria-Universität Klagenfurt), Christian Timmerer (Alpen-Adria-Universität Klagenfurt, Bitmovin) and Radu Prodan (Alpen-Adria-Universität Klagenfurt).

Abstract: HTTP Adaptive Streaming of video content is becoming an integral part of the Internet and accounts for the majority of today’s traffic. Although Internet bandwidth is constantly increasing, video compression technology plays an important role and the major challenge is to select and set up multiple video codecs, each with hundreds of transcoding parameters. Additionally, the transcoding speed depends directly on the selected transcoding parameters and the infrastructure used. Predicting transcoding time for multiple transcoding parameters with different codecs and processing units is a challenging task, as it depends on many factors. This paper provides a novel and considerably fast method for transcoding time prediction using video content classification and neural network prediction. Our artificial neural network (ANN) model predicts the transcoding times of video segments for state-of-the-art video codecs based on transcoding parameters and content complexity. We evaluated our method for two video codecs/implementations (AVC/x264 and HEVC/x265) as part of large-scale HTTP Adaptive Streaming services. The ANN model of our method is able to predict the transcoding time by minimizing the mean absolute error (MAE) to 1.37 and 2.67 for x264 and x265 codecs, respectively. For x264, this is an improvement of 22% compared to the state of the art.

Keywords: Transcoding time prediction, adaptive streaming, video transcoding, neural networks, video encoding, video complexity class, MPEG-DASH
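The abstract above reports prediction quality as mean absolute error (MAE). For readers unfamiliar with the metric, the sketch below shows how MAE is computed from measured and predicted values. The sample transcoding times are invented for illustration and are not taken from the paper.

```python
from typing import Sequence


def mean_absolute_error(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Average of the absolute differences between paired values."""
    if len(actual) == 0 or len(actual) != len(predicted):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


# Invented transcoding times in seconds (illustrative only).
measured = [10.0, 12.0, 8.0, 20.0]
estimated = [11.0, 10.0, 8.0, 23.0]

# Absolute errors are 1, 2, 0 and 3, so the mean is 6 / 4 = 1.5.
mae = mean_absolute_error(measured, estimated)
```

MAE is expressed in the same unit as the predicted quantity, so a lower value means the predicted times are, on average, closer to the measured ones.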

Christian Timmerer and Peter Schelkens have been elected as Chairs of the QoMEX Steering Committee and Sebastian Möller has been elected as Treasurer.

The primary goal of the conference is to bring together leading professionals and scientists in multimedia quality and user experience from around the world. QoMEX is a conference taking place annually in early summer and guided by a steering committee.

The 12th International Conference on Quality of Multimedia Experience will be held from May 26th to 28th, 2020 in Athlone, Ireland (online). QoMEX 2020 will provide a warm welcome to leading experts from academia and industry to present and discuss current and future research on multimedia quality, quality of experience (QoE), and user experience (UX).

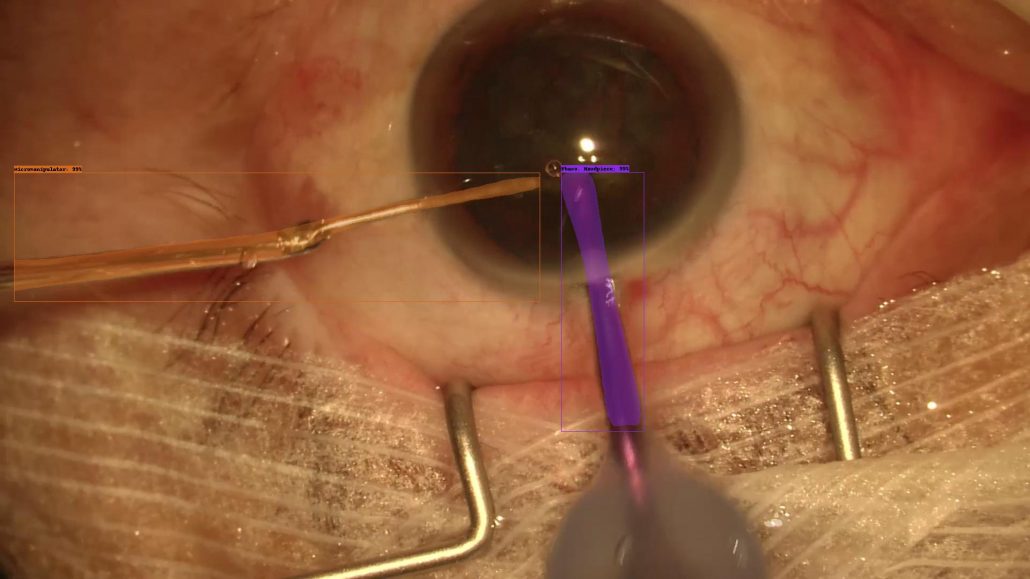

Our paper “Pixel-Based Tool Segmentation in Cataract Surgery Videos with Mask R-CNN” has been accepted for publication at the IEEE 33rd International Symposium on Computer Based Medical Systems (CBMS – http://cbms2020.org).

Authors: Markus Fox, Klaus Schöffmann, Mario Taschwer

Abstract:

Automatically detecting surgical tools in recorded surgery videos is an important building block of further content-based video analysis. In ophthalmology, the results of such methods can support training and teaching of operation techniques and enable investigation of medical research questions on a dataset of recorded surgery videos. While previous methods used frame-based classification techniques to predict the presence of surgical tools but did not localize them, we apply a recent deep-learning segmentation method (Mask R-CNN) to localize and segment surgical tools used in ophthalmic cataract surgery. We add ground-truth annotations for multi-class instance segmentation to two existing datasets of cataract surgery videos and make resulting datasets publicly available for research purposes. In the absence of comparable results from literature, we tune and evaluate the Mask R-CNN approach on these datasets for instrument segmentation/localization and achieve promising results (61% mean average precision on 50% intersection over union for instance segmentation, working even better for bounding box detection or binary segmentation), establishing a reasonable baseline for further research. Moreover, we experiment with common data augmentation techniques and analyze the achieved segmentation performance with respect to each class (instrument), providing evidence for future improvements of this approach.

Acknowledgments:

This work was funded by the FWF Austrian Science Fund under grant P 31486-N31.
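The result quoted in the abstract above is measured at a 50% intersection-over-union (IoU) threshold. As a simplified sketch of that criterion, the code below computes IoU for axis-aligned bounding boxes and checks it against a 0.5 threshold. The paper's headline number concerns instance masks, so the box version here is only an assumption-laden illustration; the function names and coordinates are hypothetical.

```python
from typing import Tuple

# Hypothetical box format: (x_min, y_min, x_max, y_max) in pixels.
Box = Tuple[float, float, float, float]


def box_iou(a: Box, b: Box) -> float:
    """Area of overlap divided by area of union for two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def is_match(prediction: Box, ground_truth: Box, threshold: float = 0.5) -> bool:
    """A prediction counts as correct when its IoU reaches the threshold."""
    return box_iou(prediction, ground_truth) >= threshold


ground_truth = (0.0, 0.0, 10.0, 10.0)
loose = (5.0, 0.0, 15.0, 10.0)   # overlap 50, union 150, IoU = 1/3
tight = (2.0, 0.0, 12.0, 10.0)   # overlap 80, union 120, IoU = 2/3
```

With these boxes, `is_match(loose, ground_truth)` is false and `is_match(tight, ground_truth)` is true. Mean average precision then aggregates such match decisions over confidence thresholds and classes, which this sketch does not attempt.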

Abstract: HTTP adaptive streaming with chunked transfer encoding can offer low-latency streaming without sacrificing the coding efficiency. This allows media segments to be delivered while still being packaged. However, conventional schemes often make widely inaccurate bandwidth measurements due to the presence of idle periods between the chunks, which causes sub-optimal adaptation decisions. To address this issue, we earlier proposed ACTE (ABR for Chunked Transfer Encoding), a bandwidth prediction scheme for low-latency chunked streaming. While ACTE was a significant step forward, in this study we focus on two remaining open areas, namely (i) quantifying the impact of encoding parameters, including chunk and segment durations, bitrate levels, minimum interval between IDR-frames and frame rate, on ACTE, and (ii) exploring the impact of video content complexity on ACTE. We thoroughly investigate these questions and report on our findings. We also discuss some additional issues that arise in the context of pursuing very low latency HTTP video streaming.

Authors: Abdelhak Bentaleb (National University of Singapore), Christian Timmerer (Alpen-Adria-Universität Klagenfurt, Bitmovin), Ali C. Begen (Ozyegin University, Networked Media), Roger Zimmermann (National University of Singapore)

Keywords: HAS; ABR; DASH; CMAF; low-latency; HTTP chunked transfer encoding; bandwidth measurement and prediction; RLS; encoding parameters; FFmpeg
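The abstract above notes that idle periods between chunks distort bandwidth measurements. The sketch below illustrates that effect with invented numbers: dividing the bytes of a segment by the full wall-clock download time (idle gaps included) yields a much lower figure than dividing by the time the link was actually busy. This is not an implementation of ACTE; the chunk tuple format and function names are assumptions made for this example.

```python
from typing import List, Tuple

# Hypothetical chunk record: (size_bytes, start_time_s, end_time_s).
Chunk = Tuple[int, float, float]


def segment_level_throughput(chunks: List[Chunk]) -> float:
    """Bits per second over the whole segment download, idle gaps included."""
    total_bits = 8 * sum(size for size, _, _ in chunks)
    elapsed = chunks[-1][2] - chunks[0][1]
    return total_bits / elapsed


def active_throughput(chunks: List[Chunk]) -> float:
    """Bits per second counting only the time spent receiving chunk data."""
    total_bits = 8 * sum(size for size, _, _ in chunks)
    busy = sum(end - start for _, start, end in chunks)
    return total_bits / busy


# Invented trace: a 1 Mbit chunk becomes available every 0.5 s and
# takes 0.1 s to download, leaving the link idle in between.
trace = [
    (125_000, 0.0, 0.1),
    (125_000, 0.5, 0.6),
    (125_000, 1.0, 1.1),
    (125_000, 1.5, 1.6),
]
naive = segment_level_throughput(trace)  # about 2.5 Mbit/s
busy_only = active_throughput(trace)     # about 10 Mbit/s
```

In this made-up trace the segment-level estimate is roughly a quarter of the busy-time estimate, which gives a sense of why a rate adaptation scheme relying on the former could pick an unnecessarily low bitrate.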