Real-Time Quality- and Energy-Aware Bitrate Ladder Construction for Live Video Streaming

IEEE Journal on Emerging and Selected Topics in Circuits and Systems

Mohammad Ghasempour (AAU, Austria), Hadi Amirpour (AAU, Austria), and Christian Timmerer (AAU, Austria)

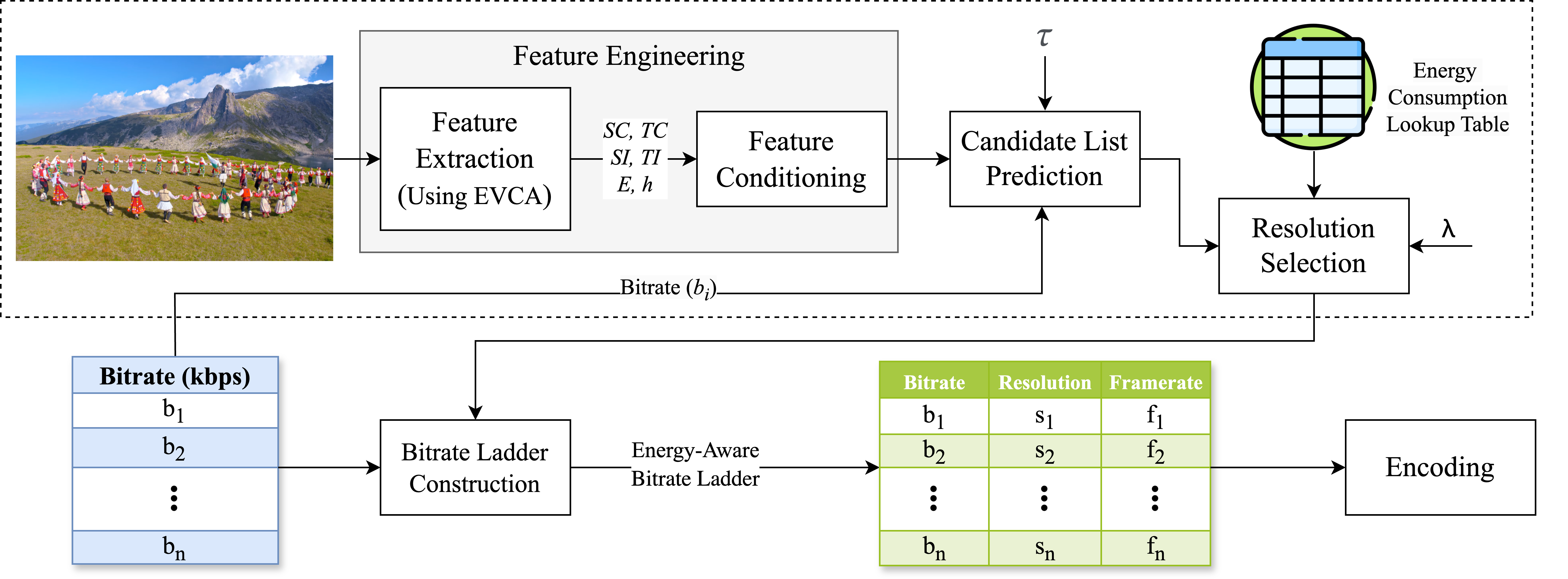

Abstract: The growing demand for high-quality live video streaming has led to significant energy consumption, creating challenges for sustainable media delivery. Traditional adaptive video streaming approaches rely on over-provisioning resources, leading to a fixed bitrate ladder that is often inefficient across heterogeneous use cases and video content. Although dynamic approaches such as per-title encoding optimize the bitrate ladder for each video, they mainly target video-on-demand, where the latency of constructing the ladder is less critical, and they do not address energy consumption. In this paper, we present LiveESTR, a method for building a quality- and energy-aware bitrate ladder for live video streaming. LiveESTR eliminates the need for exhaustive video encoding on the server side, making bitrate ladder construction fast and energy efficient. A lightweight multi-label classification model, combined with a lookup table, estimates the optimized resolution-bitrate pairs of the bitrate ladder. Both spatial and temporal resolutions are supported to achieve high energy savings while preserving compression efficiency. In addition, a tunable parameter λ and a threshold τ are introduced to balance the trade-off among compression efficiency, quality, and energy efficiency. Experimental results show that, compared to traditional per-title encoding, LiveESTR reduces encoder and decoder energy consumption by 74.6% and 29.7%, respectively, with only a 2.1% increase in Bjøntegaard Delta Rate (BD-Rate). Moreover, when λ is increased to prioritize video quality, LiveESTR achieves 2.2% better compression efficiency in terms of BD-Rate while still reducing decoder energy consumption by 7.5%.
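The abstract reports its compression results as BD-Rate. As a reminder of what that number means, here is a minimal sketch of the classic Bjøntegaard computation. It assumes the common formulation: a least-squares cubic fit of log-bitrate as a function of quality for each rate-quality curve, with the fitted curves averaged over the overlapping quality range. The paper's exact settings (quality metric, interpolation method) are not stated here and may differ, and the function names `bd_rate` and `_polyfit_cubic` are hypothetical. A positive result means the test curve needs more bitrate than the reference for the same quality. A negative result means it needs less.

```python
import math
from typing import List, Sequence


def _polyfit_cubic(x: Sequence[float], y: Sequence[float]) -> List[float]:
    """Least-squares fit y ~ c0 + c1*x + c2*x^2 + c3*x^3 via the normal equations."""
    n = 4
    # Augmented normal-equation matrix [A^T A | A^T y] for the cubic basis.
    m = [[sum(xi ** (i + j) for xi in x) for j in range(n)]
         + [sum((xi ** i) * yi for xi, yi in zip(x, y))] for i in range(n)]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for k in range(col, n + 1):
                m[r][k] -= f * m[col][k]
    # Back substitution yields coefficients ordered c0..c3.
    coeffs = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = m[i][n] - sum(m[i][k] * coeffs[k] for k in range(i + 1, n))
        coeffs[i] = s / m[i][i]
    return coeffs


def bd_rate(rate_ref: Sequence[float], q_ref: Sequence[float],
            rate_test: Sequence[float], q_test: Sequence[float]) -> float:
    """Bjontegaard Delta Rate (%) of the test curve relative to the reference curve."""
    if min(len(q_ref), len(q_test)) < 4:
        raise ValueError("a cubic fit needs at least four rate-quality points per curve")
    lo = max(min(q_ref), min(q_test))  # overlapping quality interval
    hi = min(max(q_ref), max(q_test))
    if hi <= lo:
        raise ValueError("quality ranges do not overlap")
    center = (lo + hi) / 2.0  # shift quality values to improve numerical conditioning

    def avg_log_rate(rates: Sequence[float], qs: Sequence[float]) -> float:
        coeffs = _polyfit_cubic([q - center for q in qs], [math.log(r) for r in rates])
        a, b = lo - center, hi - center
        # Closed-form integral of the fitted polynomial over [a, b].
        integral = sum(c * (b ** (i + 1) - a ** (i + 1)) / (i + 1)
                       for i, c in enumerate(coeffs))
        return integral / (hi - lo)

    diff = avg_log_rate(rate_test, q_test) - avg_log_rate(rate_ref, q_ref)
    return (math.exp(diff) - 1.0) * 100.0
```

For example, a test curve that needs 10% more bitrate at every quality point yields a BD-Rate of about +10%. Shifting quality values by the interval midpoint before fitting does not change the fitted curve over that interval. It only keeps the powers of x small, so the 4×4 normal equations stay well conditioned.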